by Wes Hackett | Jun 23, 2017 | AddIn365, AI, App Services, Azure, Bot Framework, Bots, Compose extensions, Development, Event, Featured, Microsoft Teams, Office365, QnAMaker, SPSLondon, Tabs, Visual Studio

Microsoft Teams is part of the Office 365 collaboration portfolio and combines many of the Office 365 services in a chat-based workspace. Microsoft Teams also has many extensibility points, and this series will explore them. We’ll use a fictional example and build it...

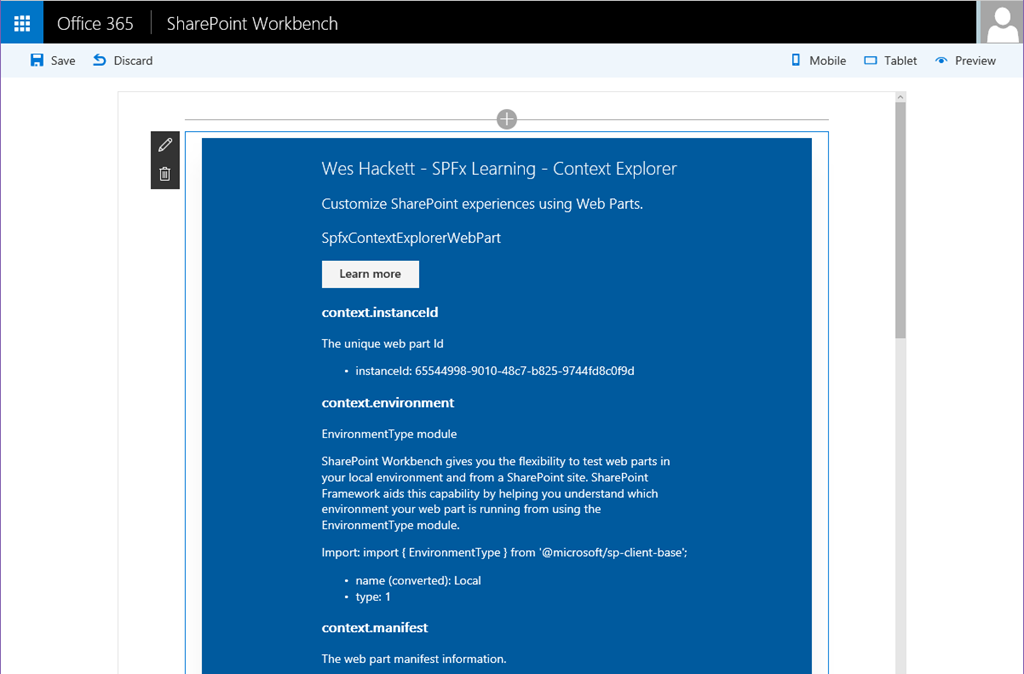

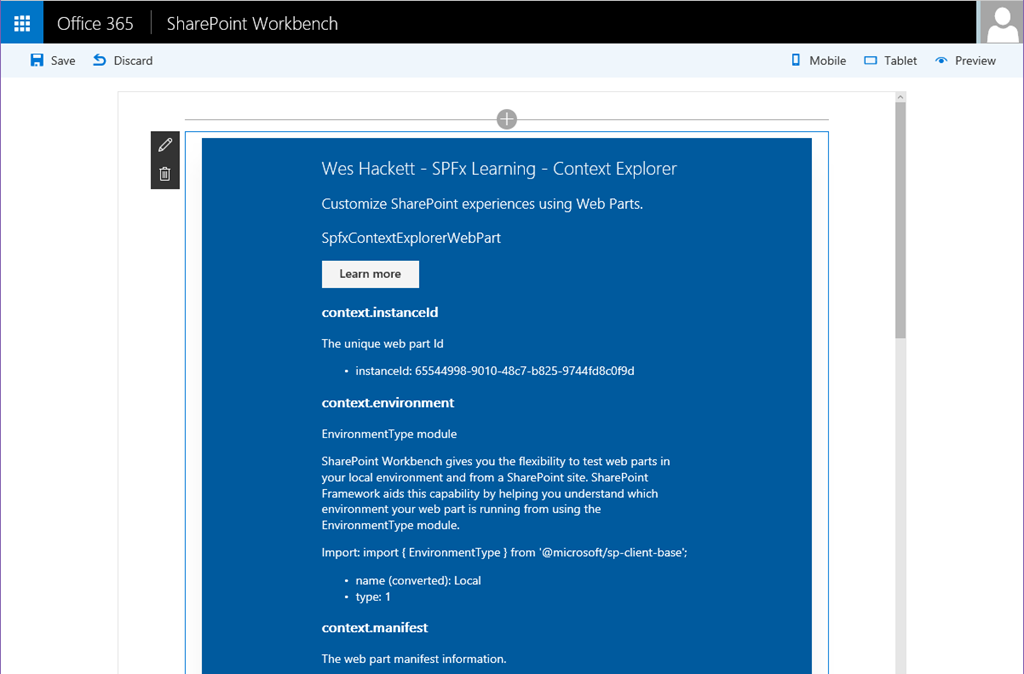

by Wes Hackett | Aug 27, 2016 | Development, Event, Featured, FutureOfSharePoint, Office365, SharePoint, SPFx, VS Code

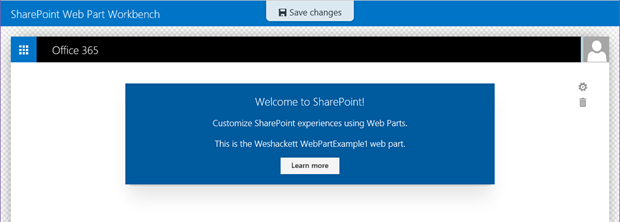

The new SharePoint Framework developer preview is available now, and you can find out how to get started in the Microsoft GitHub repo (https://github.com/SharePoint/sp-dev-docs/wiki). Follow through the setup and tutorials if you’re new to the SPFx and how it...

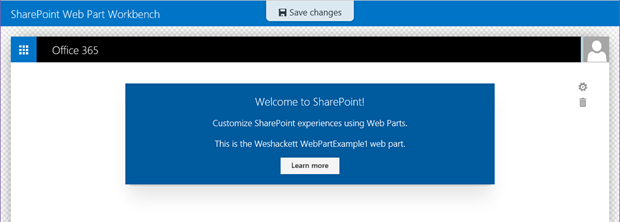

by Wes Hackett | Jul 8, 2016 | Development, Featured, FutureOfSharePoint, Office365, SharePoint, SPFx, Visual Studio

Microsoft announced the new SharePoint Framework at the Future of SharePoint event on May 4th, 2016. You can read the full announcement here (https://blogs.office.com/2016/05/04/the-sharepoint-framework-an-open-and-connected-platform/). Dan Kogan, principal group...

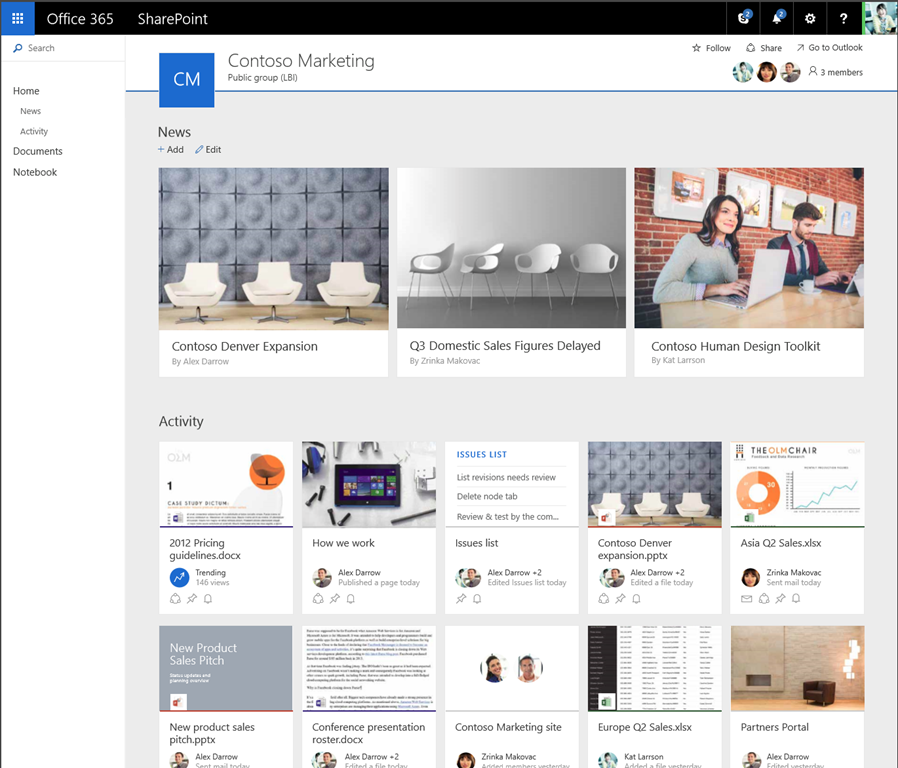

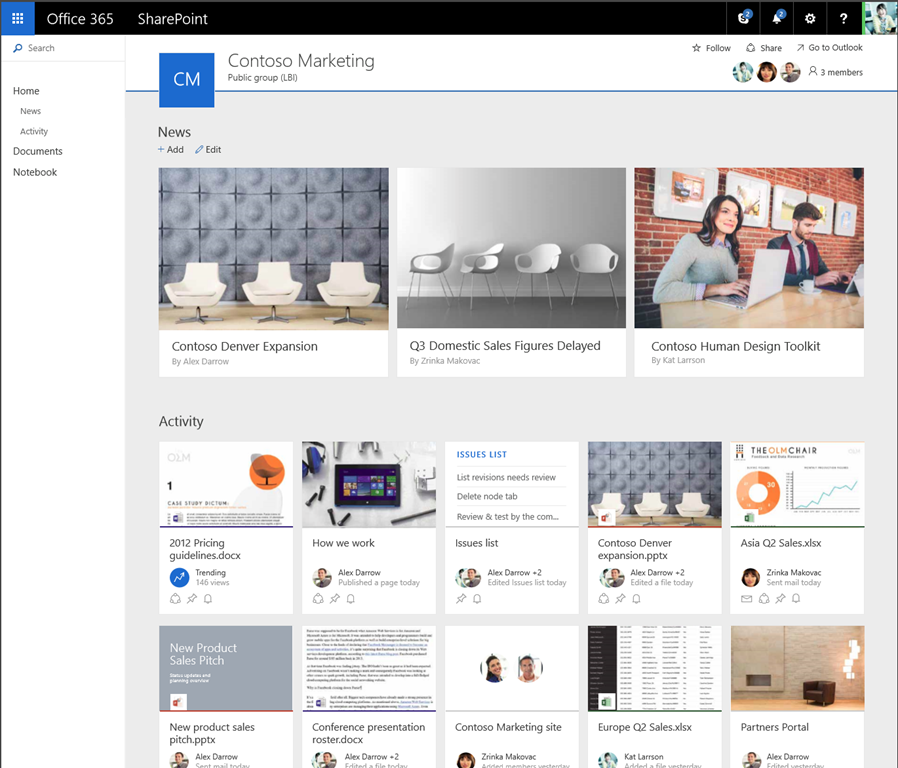

by Wes Hackett | May 4, 2016 | Delve, Development, Event, Featured, MVP, Office Online, Office365, OneDrive, SharePoint, Uncategorized, Yammer

Today Microsoft hosted a Future of SharePoint event, sharing publicly for the first time what the SharePoint roadmap has to offer in 2016 and beyond. It did not disappoint. The event placed SharePoint and OneDrive’s soon-to-be-released simple user experience and...

by Wes Hackett | Oct 1, 2015 | Development, Featured, MVP, Office365, SharePoint, Sharepoint 2013

I’m happy to announce that I’ve been awarded Microsoft MVP 2015 for SharePoint Server. This is my third year as an MVP and it continues to be an amazing privilege to be recognised for my continued contributions. October 1st is one of those days like any other until...

by Wes Hackett | Sep 12, 2015 | AddIn365, Apps, Apps for Office, Azure, Development, Featured, Office365, Sharepoint 2013

Office 365 presents an opportunity to meet more business objectives than ever before with an ever-expanding set of services. However, outdated attitudes and practices towards implementation of the Office 365 platform make it difficult for many organisations to...